AnythingLLM: The all-in-one AI app you were looking for

Chat with your docs, use AI Agents, hyper-configurable, multi-user, & no frustrating setup required. AnythingLLM is a full-stack application that lets you turn any document, resource, or piece of content into context that any LLM can use as a reference during chatting. You can choose which LLM or vector database to use, and the application supports multi-user management and permissions.

Product Overview

AnythingLLM is a full-stack application that lets you combine commercial off-the-shelf LLMs or popular open-source LLMs with vector database solutions to build a private ChatGPT with no compromises. You can run it locally or host it remotely, and chat intelligently with any documents you provide.

AnythingLLM divides your documents into objects called workspaces. A workspace functions a lot like a thread, but it also containerizes your documents. Workspaces can share documents, but they do not talk to each other, so the context of each workspace stays clean.
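To make the isolation concrete, here is a minimal Python sketch of the workspace idea under stated assumptions. The classes `Workspace` and `DocumentStore` are hypothetical and do not reflect AnythingLLM's internals, and a plain keyword match stands in for the vector similarity search a real deployment would use. Each document is stored once and can be attached to several workspaces, but a search only looks at the documents attached to the workspace it runs against.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Hypothetical workspace: a named container that references document IDs."""
    name: str
    document_ids: set[str] = field(default_factory=set)

    def attach(self, doc_id: str) -> None:
        self.document_ids.add(doc_id)


class DocumentStore:
    """Hypothetical store: each document is kept once and shared by reference."""

    def __init__(self) -> None:
        self.documents: dict[str, str] = {}

    def upload(self, doc_id: str, text: str) -> None:
        self.documents[doc_id] = text

    def search(self, workspace: Workspace, keyword: str) -> list[str]:
        # Only documents attached to this workspace are candidates, so one
        # workspace's context never leaks into another's. A keyword match
        # stands in for real vector similarity search.
        return sorted(
            doc_id
            for doc_id in workspace.document_ids
            if keyword.lower() in self.documents[doc_id].lower()
        )


store = DocumentStore()
store.upload("handbook.pdf", "Vacation policy: 20 days per year")
store.upload("roadmap.docx", "Q3 roadmap: ship the vacation planner")

hr = Workspace("hr")
hr.attach("handbook.pdf")
product = Workspace("product")
product.attach("roadmap.docx")
product.attach("handbook.pdf")  # the same document, shared by two workspaces

print(store.search(hr, "vacation"))       # ['handbook.pdf']
print(store.search(product, "vacation"))  # ['handbook.pdf', 'roadmap.docx']
```

Here handbook.pdf is shared by both workspaces, yet a search in the hr workspace never surfaces roadmap.docx.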

Some cool features of AnythingLLM

- Multi-user instance support and permissioning

- Agents inside your workspace (browse the web, run code, etc.)

- Custom Embeddable Chat widget for your website

- Multiple document type support (PDF, TXT, DOCX, etc.)

- Manage documents in your vector database from a simple UI

- Two chat modes: conversation and query. Conversation retains previous questions and amendments. Query is simple QA against your documents.

- In-chat citations

- 100% Cloud deployment ready.

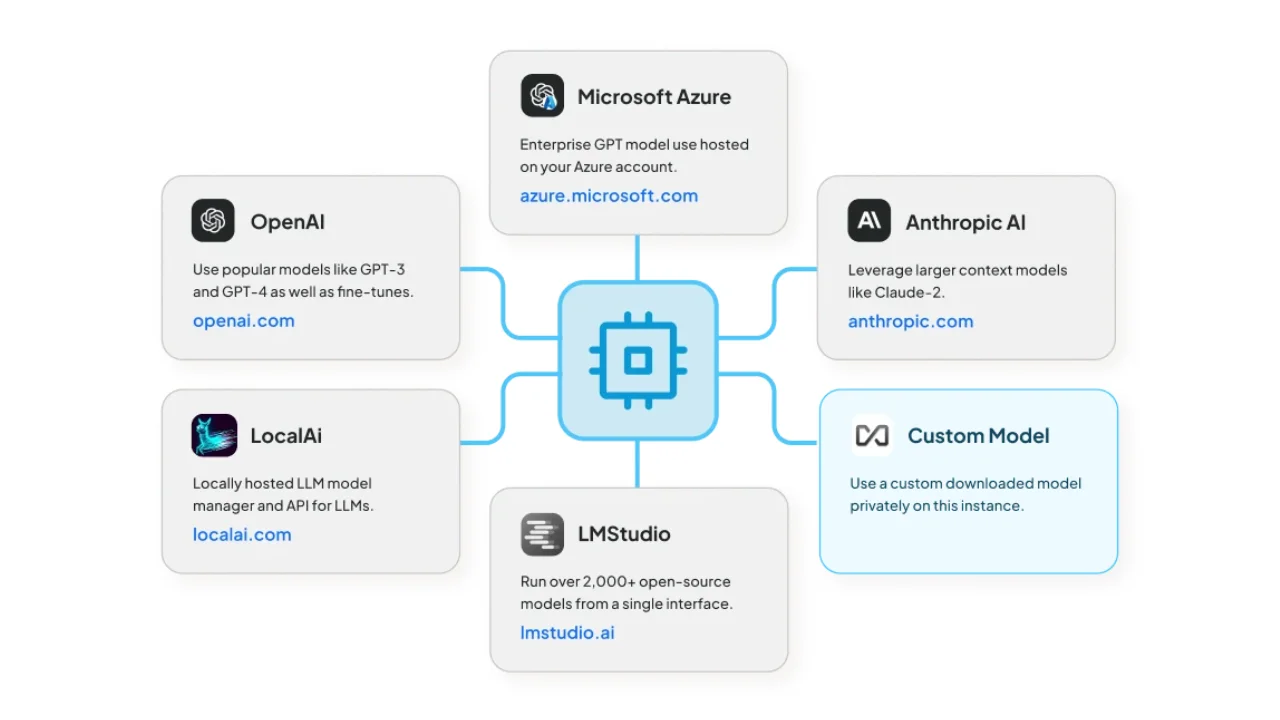

- "Bring your own LLM" model.

- Extremely efficient cost-saving measures for managing very large documents: you never pay to embed a massive document or transcript more than once. The project claims this makes it 90% more cost-effective than other document chatbot solutions.

- Full Developer API for custom integrations!
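The difference between the two chat modes in the feature list can be sketched as a prompt-assembly function. This is a minimal illustration under assumptions, not AnythingLLM's actual code: `build_messages` is a hypothetical helper, and the `role`/`content` message dicts simply mirror the format common to chat-completion APIs. In conversation mode the earlier turns are replayed to the model; in query mode only the retrieved document context and the current question are sent.

```python
from typing import Literal

Mode = Literal["conversation", "query"]
Message = dict[str, str]


def build_messages(
    mode: Mode,
    question: str,
    history: list[Message],
    context_chunks: list[str],
) -> list[Message]:
    """Hypothetical prompt assembly contrasting the two chat modes."""
    context = "\n".join(context_chunks)
    system: Message = {
        "role": "system",
        "content": f"Answer using this document context:\n{context}",
    }
    user: Message = {"role": "user", "content": question}
    if mode == "conversation":
        # Replay earlier turns so follow-ups and amendments make sense.
        return [system, *history, user]
    if mode == "query":
        # Stateless QA: ignore history, rely only on the retrieved context.
        return [system, user]
    raise ValueError(f"unknown chat mode: {mode!r}")


history: list[Message] = [
    {"role": "user", "content": "What is the refund window?"},
    {"role": "assistant", "content": "30 days."},
]
chunks = ["Refunds are accepted within 30 days."]
print(len(build_messages("conversation", "Does that include sale items?", history, chunks)))  # 4
print(len(build_messages("query", "Does that include sale items?", history, chunks)))  # 2
```

The follow-up "Does that include sale items?" only makes sense with the earlier turns attached, which is why conversation mode carries history while query mode treats each question on its own.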
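The cost-saving bullet in the feature list rests on never re-embedding content that has already been embedded. One common way to get that property, assumed here rather than taken from AnythingLLM's source, is to key cached vectors by a hash of the content. `EmbeddingCache` is a hypothetical class, and `embed_fn` stands in for whichever (possibly paid) embedding provider is configured.

```python
import hashlib
from typing import Callable

Vector = list[float]


class EmbeddingCache:
    """Hypothetical cache that embeds each distinct piece of text only once."""

    def __init__(self, embed_fn: Callable[[str], Vector]) -> None:
        self._embed_fn = embed_fn  # stand-in for the configured (possibly paid) embedder
        self._vectors: dict[str, Vector] = {}  # SHA-256 of text -> cached vector
        self.calls = 0  # number of times the embedder was actually invoked

    def embed(self, text: str) -> Vector:
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key not in self._vectors:
            # Cache miss: pay for the embedding once and remember the result.
            self.calls += 1
            self._vectors[key] = self._embed_fn(text)
        return self._vectors[key]


# Usage with a fake embedder that returns the text length as a 1-D vector.
cache = EmbeddingCache(lambda text: [float(len(text))])
cache.embed("a very long meeting transcript")
cache.embed("a very long meeting transcript")  # served from the cache
print(cache.calls)  # 1
```

Because the key is derived from the text itself, attaching the same transcript to a second workspace would hit the cache instead of triggering another embedding call.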

Supported LLMs, Embedder Models, Speech models, and Vector Databases

Large Language Models (LLMs):

- Any open-source llama.cpp-compatible model

- OpenAI

- OpenAI (Generic)

- Azure OpenAI

- Anthropic

- Google Gemini Pro

- Hugging Face (chat models)

- Ollama (chat models)

- LM Studio (all models)

- LocalAI (all models)

- Together AI (chat models)

- Perplexity (chat models)

- OpenRouter (chat models)

- Mistral

- Groq

- Cohere

- KoboldCPP

- LiteLLM

- Text Generation Web UI

Embedder models:

- AnythingLLM Native Embedder (default)

- OpenAI

- Azure OpenAI

- LocalAI (all)

- Ollama (all)

- LM Studio (all)

- Cohere

Audio Transcription models:

- AnythingLLM Built-in (default)

- OpenAI

TTS (text-to-speech) support:

- Native Browser Built-in (default)

- OpenAI TTS

- ElevenLabs

STT (speech-to-text) support:

- Native Browser Built-in (default)

Vector Databases:

Technical Overview

This monorepo consists of the following main sections:

- frontend: A ViteJS + React frontend for easily creating and managing all the content the LLM can use.

- server: A NodeJS Express server that handles all interactions, including vector database management and LLM interactions.

- collector: A NodeJS Express server that processes and parses documents from the UI.

- docker: Docker instructions and build process, plus information for building from source.

- embed: Code specifically for generating the embeddable chat widget.

Self-Hosting

STAAS.IO and the community maintain a number of deployment methods, scripts, and templates that you can use to run AnythingLLM locally. To learn how to deploy in your preferred environment, or how to deploy automatically, refer to this article: AnythingLLM - The Quintessential AI Document Assistant


Source: Mintplex Labs